Conceptual Project Showcase

Throughout my time earning an M.Des in Interaction Design from the University of Washington, I worked on multiple cross-disciplinary design teams, where we followed our unique interests to design speculative products and services.

The following showcase features conceptual projects exploring embedded AI functionality, new gestural UI patterns, and innovation in healthcare platforms.

My role on these cross-disciplinary teams varied. I was most often the lead Product Designer or UI/UX Designer, and my work also spanned UX Research, Visual Design, and Product Strategy.

Projects Included

AI Educational Workspace (February - March 2023) See Project Page ↗

Google Sign (January - February 2023) See Project Page ↗

TriSimpli Clinical Trial Matching Platform (January - March 2022) See Project Page ↗

Role

Product (UI/UX) Designer

Timeline

September 2021 - June 2023

Deliverables

Web/Desktop User Experience

Native App Experience

Competitive Research

UX Research Deliverables

AI Educational Workspace

A conceptual learning management platform that imagines AI as a responsible and helpful tool that enriches the learning experience.

This project speculates about the growing importance of students developing critical thinking skills and a process-driven approach to completing assignments, rather than spending their time producing the output itself.

I was the lead Product and Visual Designer for this project, responsible for the interactive prototype, UX artifacts such as wireframes, the demo video's script and storyboarding, competitive analysis and research, and strategy.

Background & Challenge

Recent advancements in AI’s ability to source information, generate ideas, and write based on style and content prompts have disrupted education.

Seven to ten years in the future, AI will be even more ubiquitous and powerful in its ability to automate grunt work.

How might we create a learning management platform that responsibly integrates with AI to create a robust, yet semi-automated learning experience?

Research and framework-building determined elements of the core project direction and informed product functionality.

Building upon desk and competitive research, I led the team's efforts in developing three directions for our core product framework, based around audience and areas of opportunity.

The Research Journey focused on helping students learn deeply using AI.

Checkpoint Learning aimed to create more personalized learning experiences for students.

Automation for Teachers envisioned AI tools to reduce grunt work.

After deliberating about each direction's potential for high impact, our team chose to focus on the Research Journey concept and conducted ideation activities to determine core UI functionality.

We focused on three main product features: a Search Tray to collect information, a Mind Map to connect that information with annotations, and AI automations and recommendations.

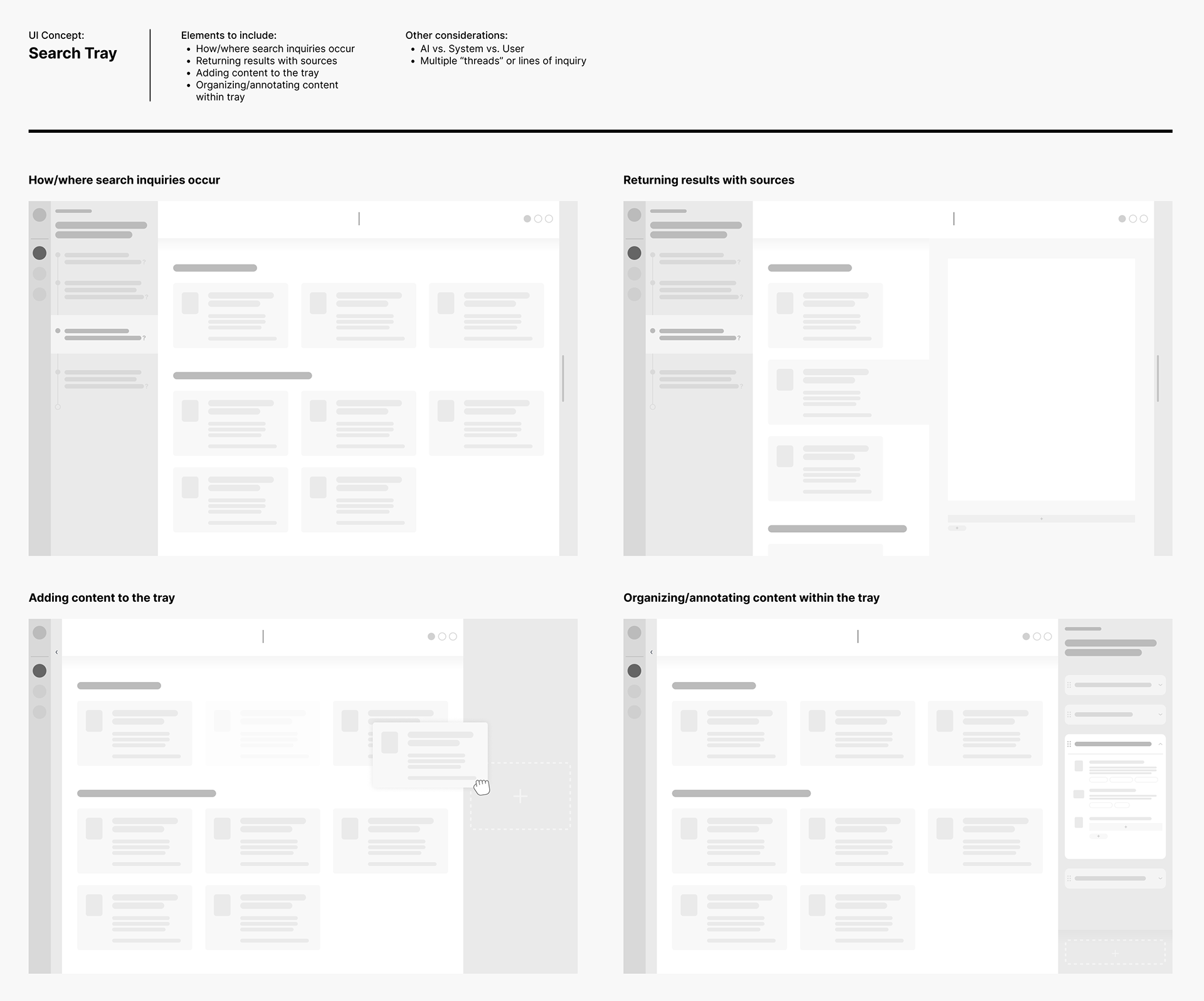

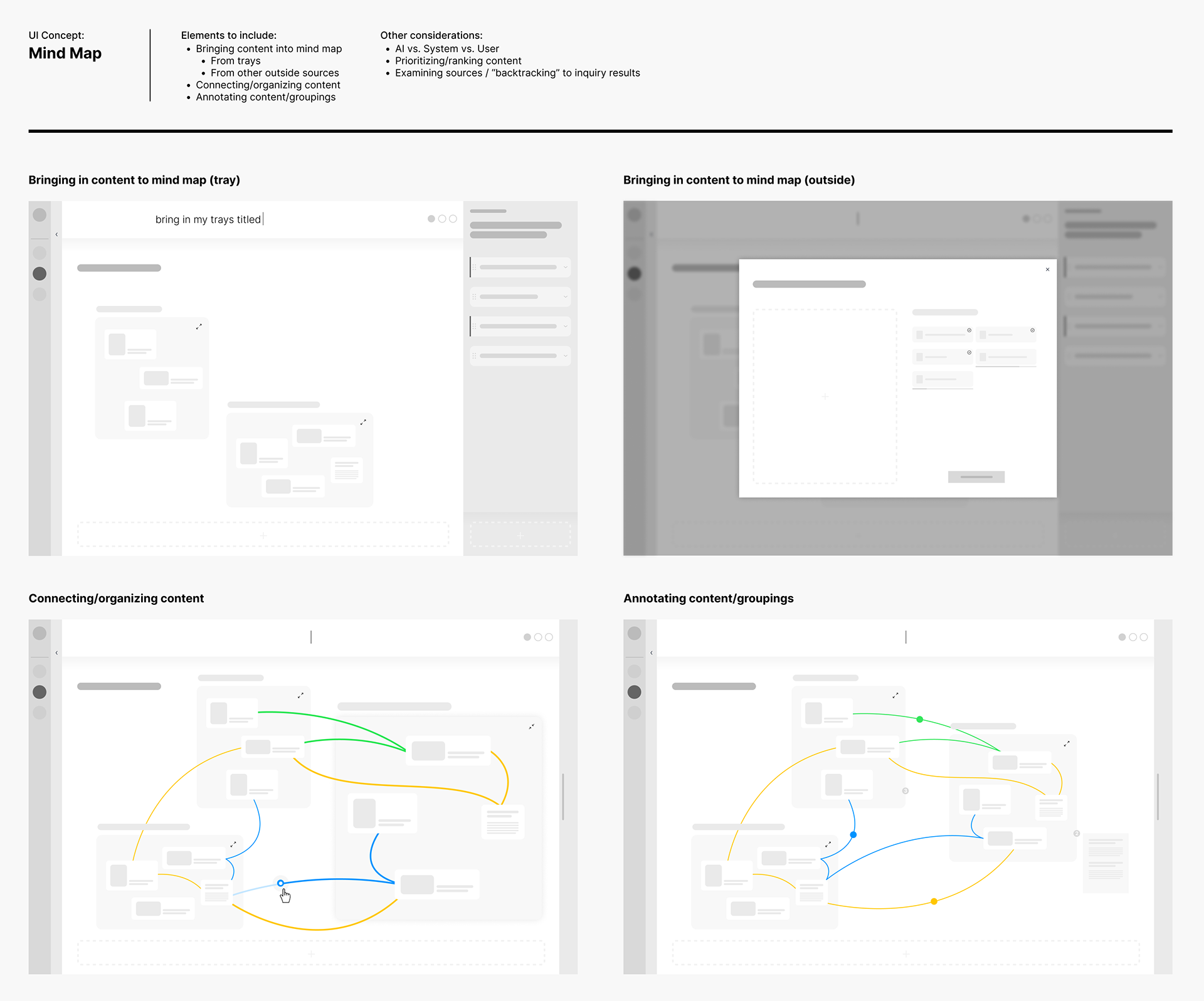

I created wireframes to visualize how each feature might function.

For the Search Tray, wireframes explored how and where search inquiries occur, how results are returned, how content is added, and how it is organized and annotated.

Mind Map wireframes explored bringing content from the Search Tray to the Mind Map, connecting and prioritizing relevant information, creating descriptors, and AI’s presence.

Our mid-fidelity designs responded to feedback on the wireframes and explored key interaction modes such as dragging, connecting, and selecting.

I built interactive prototypes while simultaneously exploring UI details at a high-polish level.

Applying typographic and visual design nuances increased the level of detail and polish in mid-fidelity mockups. I created custom component designs, based on the product design system, to achieve a more detailed communication of market updates for users.

We explored flexible bubbles for grouped content, color coding for AI and user interaction, and consistent circular treatments for content, in three sizes based on user-determined priority.

These prototypes informed a deeper level of micro-interactivity in high-fidelity prototypes for the final concept video.

Next, I created micro-interactions and animation to bring the design to life in high-fidelity prototypes.

For both native app and desktop web, I built in horizontal scroll for content cards, varied visual states for interactive elements, expanding and collapsing menus, and graphic animations.

During this stage, I was heavily involved in the concept video's storyboard, final script, and creative direction.

Google Sign

The conceptual design of an intuitive, effective, and fun search experience based on gestures.

People with hearing impairments who use a sign language as their primary language experience barriers when using text-based search. In other words, for signers, using text is like communicating in an entirely different language, which creates frustrating barriers to natural, intuitive articulation.

Recent technological advancements in sign language video translation have exposed an opportunity to make search more accessible and intuitive by enabling gestural, video-based input.

TriSimpli Clinical Trial Matching

A two-sided marketplace that matches Parkinson's patients to clinical trials that meet their criteria.

Ten million people worldwide are living with Parkinson's, a debilitating neurodegenerative disease that currently has no cure. Clinical research trials provide patients with a sense of hope and the opportunity to take part in cutting-edge research and treatments.

However, 80% of clinical trials fail to meet enrollment requirements, forcing the research to a halt. Though patients are interested in participating, there is no single efficient and effective way to find suitable clinical trials.